A Distributed Data-Parallel PyTorch Implementation of the Distributed Shampoo Optimizer for Training Neural Networks At-Scale

Boris Dayma 🖍️ on X: "We ran a grid search on each optimizer to find best learning rate. In addition to training faster, Distributed Shampoo proved to be better on a large…"

![Shampoo: Preconditioned Stochastic Tensor Optimization (PDF, Semantic Scholar), Figure 4](https://d3i71xaburhd42.cloudfront.net/0679950558d72791f16031dd08c39367d8dd47b8/8-Figure4-1.png)

![Shampoo: Preconditioned Stochastic Tensor Optimization (PDF, Semantic Scholar), Figure 3](https://d3i71xaburhd42.cloudfront.net/0679950558d72791f16031dd08c39367d8dd47b8/8-Figure3-1.png)

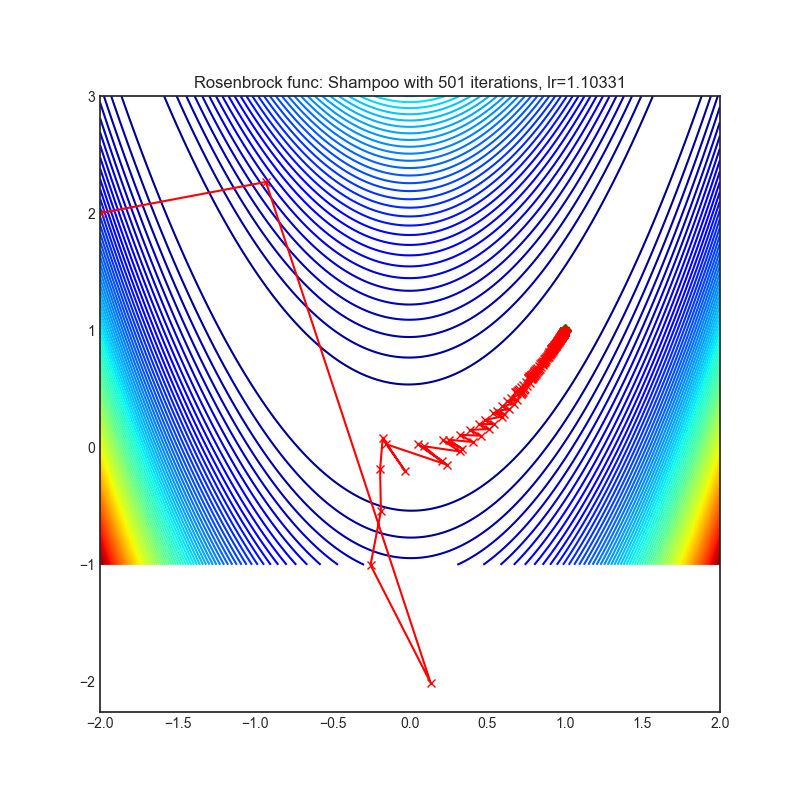

![Shampoo: Preconditioned Stochastic Tensor Optimization (PDF, Semantic Scholar), Figure 1](https://d3i71xaburhd42.cloudfront.net/0679950558d72791f16031dd08c39367d8dd47b8/2-Figure1-1.png)

![Shampoo: Preconditioned Stochastic Tensor Optimization (PDF, Semantic Scholar), Figure 2](https://d3i71xaburhd42.cloudfront.net/0679950558d72791f16031dd08c39367d8dd47b8/8-Figure2-1.png)

![Shampoo: Preconditioned Stochastic Tensor Optimization (PDF, Semantic Scholar), Table 1](https://d3i71xaburhd42.cloudfront.net/0679950558d72791f16031dd08c39367d8dd47b8/8-Table1-1.png)